Main disadvantage: Data stored in S3 can only be read (SELECT only); INSERT, UPDATE, and DELETE operations are not possible. However, this test assumes that the data does not need to be modified (e.g., historical or metadata), and the focus is purely on performance and cost.

For the test, I used Aurora PostgreSQL (db.t4g.medium, Engine version 17.4). The dataset is NYC Taxi Trip data — January 2023 (Parquet format).

Ensure that the aws_s3 and postgres_fdw extensions are available (create them if missing).

In CloudShell, download the data:

curl -O https://d37ci6vzurychx.cloudfront.net/trip-data/yellow_tripdata_2023-01.parquet

Then upload it to S3:

aws s3 cp . s3://aurora-fdw-test-gm-01/data/parquet/ --recursive --exclude "*" --include "yellow_tripdata_2023-*.parquet"

There are, of course, additional setup steps that are required but not covered in detail here—such as S3 bucket creation, security group rules, IAM permissions and roles, and CloudShell-to-Aurora access configuration. The focus remains on the benchmark test itself.

The aws_s3 extension in Aurora PostgreSQL primarily supports CSV, text, and binary formats—Parquet is not directly supported. Therefore, Parquet must be converted to CSV first. Yes, I could have used pre-existing CSV files, but performing this extra step is valuable—learning something new is never a waste of time.

To convert parquet to csv Athena can be used. First create External Table for Parquet Data in Athena-

CREATE EXTERNAL TABLE nyc_taxi_parquet_2023

( VendorID BIGINT,

tpep_pickup_datetime TIMESTAMP,

tpep_dropoff_datetime TIMESTAMP,

passenger_count DOUBLE,

trip_distance DOUBLE,

PULocationID BIGINT,

DOLocationID BIGINT,

fare_amount DOUBLE,

tip_amount DOUBLE,

total_amount DOUBLE )

STORED AS PARQUET LOCATION 's3://aurora-fdw-test-gm-01/data/parquet/';

Then convert Parquet to CSV using CTAS –

CREATE TABLE nyc_taxi_csv

WITH (

format = 'TEXTFILE',

field_delimiter = ',',

external_location = 's3://aurora-fdw-test-gm-01/data/csv-uncompressed/',

write_compression = 'NONE'

)

AS SELECT * FROM nyc_taxi_parquet_2023;

Result is a file in data/csv-uncompressed – 20251130_103431_00106_crcai_2c48e1bd-0609-4f86-a067-91c8bd1e6903 (a bit of a long, system genertated name)

Now that we have our CSV file, we can begin benchmarking.

For this test, I created a new database and user in Aurora and connected via CloudShell using psql. If Aurora Serverless were used, the Query Editor could also be an option, but in this case, it is not available—Query Editor currently supports only Aurora Serverless.

Lets create our database table in Aurora

CREATE TABLE nyc_taxi_data (

vendorid BIGINT,

tpep_pickup_datetime TIMESTAMP,

tpep_dropoff_datetime TIMESTAMP,

passenger_count DOUBLE PRECISION,

trip_distance DOUBLE PRECISION,

pulocationid BIGINT,

dolocationid BIGINT,

fare_amount DOUBLE PRECISION,

tip_amount DOUBLE PRECISION,

total_amount DOUBLE PRECISION

);

and import the csv data –

SELECT aws_s3.table_import_from_s3(

'nyc_taxi_data',

'',

'(format csv, header false, null ''\N'')',

aws_commons.create_s3_uri(

'aurora-fdw-test-gm-01',

'data/csv-uncompressed/20251130_103431_00106_crcai_2c48e1bd-0609-4f86-a067-91c8bd1e6903',

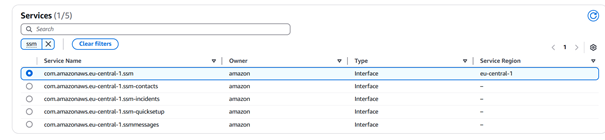

'eu-central-1'

)

);

There are 3066766 rows in nyc_taxi_data, table size is 721 MB.

Nest step is to create a foreign server pointing to the S3 location –

CREATE SERVER s3_server

FOREIGN DATA WRAPPER postgres_fdw

OPTIONS (

host '<Aurora endpoint>',

port '5432',

dbname 'nyc_tripdata_db'

);

In order to get the data from s3, we need a function to query s3 CSV data directly. Here is the code.

Now data in the DB and in s3 can be fetched like this –

SELECT COUNT(*) as local_count FROM nyc_taxi_data;

SELECT COUNT(*) as s3_count FROM query_s3_nyc_taxi();

Our benchmark infrastructure includes a table with the results (benchmark_results), timing function (benchmark_query), system monitoring function (capture_system_stats) and workload test function (run_workload_benchmark). Here is the code.

benchmark_query function measures and compares query execution times between different data access methods (Aurora direct vs S3 import approaches).

capture_system_stats function monitors Aurora resource usage (CPU, memory, I/O) during operations to calculate true costs including I/O spikes from S3 imports.

With all prerequisites in place, the benchmarking can now begin. The following tests will be done (just various sql statements )

Test 1: Simple COUNT queries

Test 2: Simple SELECT with LIMIT

Test 3: Simple aggregations

Test 4: Date range filtering

Test 5: Numeric filtering

Test 6: Multiple condition filtering

Test 7: GROUP BY with aggregations

Test 8: Complex aggregation with date grouping

Test 9: Hourly analysis

Test 10: Complex analytical query

Test 11: Window functions

Test 12: First run (cold cache) - force fresh load

Test 13: Second run (warm cache)

Test 14: Third run (warm cache)

Test 15: Complex query with cache

Test 16: Workload Simulation Tests

SQL statements are here.

And the results – Overall performance comparison

SELECT

query_type,

data_source,

COUNT(*) as test_count,

ROUND(AVG(execution_time_ms)) as avg_time_ms,

ROUND(MIN(execution_time_ms)) as min_time_ms,

ROUND(MAX(execution_time_ms)) as max_time_ms,

ROUND(STDDEV(execution_time_ms)) as stddev_ms

FROM benchmark_results

WHERE data_source IN ('local', 's3')

GROUP BY query_type, data_source

ORDER BY query_type, data_source;

| query_type |

data_source |

test_count |

avg_time_ms |

min_time_ms |

max_time_ms |

stddev_ms |

| aggregation |

local |

5 |

452 |

0 |

1279 |

543 |

| aggregation |

s3 |

5 |

1530 |

1148 |

2203 |

422 |

| cache_test |

s3 |

4 |

3190 |

1166 |

8744 |

3709 |

| complex |

local |

2 |

640 |

1 |

1279 |

904 |

| complex |

s3 |

2 |

1603 |

924 |

2281 |

960 |

| workload |

local |

5 |

0 |

0 |

0 |

0 |

| workload |

s3 |

5 |

1297 |

1246 |

1359 |

49 |

| filter |

local |

3 |

219 |

214 |

227 |

7 |

| filter |

s3 |

3 |

1317 |

1290 |

1346 |

28 |

| select |

local |

1 |

0 |

0 |

0 |

|

| select |

s3 |

1 |

243 |

243 |

243 |

|

Lets check the Performance ratio analysis

WITH performance_comparison AS (

SELECT

test_name,

query_type,

MAX(CASE WHEN data_source = 'local' THEN execution_time_ms END) as local_time,

MAX(CASE WHEN data_source = 's3' THEN execution_time_ms END) as s3_time

FROM benchmark_results

WHERE data_source IN ('local', 's3')

GROUP BY test_name, query_type

)

SELECT

test_name,

query_type,

local_time,

s3_time,

ROUND(s3_time::NUMERIC / NULLIF(local_time, 0)::NUMERIC, 2) as s3_vs_local_ratio,

CASE

WHEN local_time IS NULL THEN 'S3 Only'

WHEN s3_time IS NULL THEN 'Local Only'

WHEN s3_time < local_time THEN 'S3 Faster'

WHEN s3_time > local_time * 2 THEN 'Local Much Faster'

WHEN s3_time > local_time THEN 'Local Faster'

ELSE 'Similar Performance'

END as performance_verdict

FROM performance_comparison

ORDER BY s3_vs_local_ratio NULLS LAST;

| test_name |

query_type |

local_time |

s3_time |

s3_vs_local_ratio |

performance_verdict |

| Hourly Analysis |

aggregation |

1279 |

2203 |

1.72 |

Local Faster |

| Complex Analysis |

complex |

1279 |

2281 |

1.78 |

Local Faster |

| Daily Aggregation |

aggregation |

698 |

1628 |

2.33 |

Local Much Faster |

| Group By Vendor |

aggregation |

281 |

1456 |

5.18 |

Local Much Faster |

| Complex Filter |

filter |

227 |

1314 |

5.79 |

Local Much Faster |

| Fare Filter |

filter |

214 |

1290 |

6.03 |

Local Much Faster |

| Date Filter |

filter |

217 |

1346 |

6.20 |

Local Much Faster |

| Window Functions |

complex |

1 |

924 |

924.00 |

Local Much Faster |

| Simple AVG |

aggregation |

1 |

1216 |

1216.00 |

Local Much Faster |

| Select Limit 1000 |

select |

0 |

243 |

|

Local Much Faster |

| Simple Count |

aggregation |

0 |

1148 |

|

Local Much Faster |

| Workload Test 5 |

workload |

0 |

1359 |

|

Local Much Faster |

| Cache Test – Cold |

cache_test |

|

8744 |

|

S3 Only |

| Cache Test – Warm 1 |

cache_test |

|

1166 |

|

S3 Only |

| Cache Test – Warm 2 |

cache_test |

|

1206 |

|

S3 Only |

| Workload Test 1 |

workload |

0 |

1246 |

|

Local Much Faster |

| Workload Test 2 |

workload |

0 |

1248 |

|

Local Much Faster |

| Workload Test 3 |

workload |

0 |

1305 |

|

Local Much Faster |

| Workload Test 4 |

workload |

0 |

1326 |

|

Local Much Faster |

| Cache Complex Query |

cache_test |

|

1645 |

|

S3 Only |

— Cache effectiveness analysis

SELECT

test_name,

execution_time_ms,

LAG(execution_time_ms) OVER (ORDER BY test_timestamp) as previous_time,

CASE

WHEN LAG(execution_time_ms) OVER (ORDER BY test_timestamp) IS NOT NULL

THEN ROUND(100.0 * (1 - execution_time_ms::NUMERIC / LAG(execution_time_ms) OVER (ORDER BY test_timestamp)), 2)

ELSE NULL

END as improvement_percent

FROM benchmark_results

WHERE test_name LIKE 'Cache Test%'

ORDER BY test_timestamp;

| test_name |

execution_time_ms |

previous_time |

improvement_percent |

| Cache Test – Cold |

8744 |

|

|

| Cache Test – Warm 1 |

1166 |

8744 |

86.67 |

| Cache Test – Warm 2 |

1206 |

1166 |

-3.43 |

Now let’s try with a larger dataset.

The table size was increased (simply using INSERT INTO ... SELECT * FROM ...), and then exported to S3 as a CSV file.

Before running the next test, it is important to reset statistics and truncate the relevant tables.

select pg_stat_reset();

select pg_stat_reset_shared('bgwriter');

drop table if exists s3_nyc_taxi_cache;

truncate table benchmark_results;

The results lookk like

| query_type |

data_source |

tests |

avg_ms |

min_ms |

max_ms |

| aggregation |

local |

3 |

3612 |

1 |

10688 |

| aggregation |

s3 |

3 |

62543 |

13327 |

155211 |

| cache |

s3 |

2 |

47056 |

37977 |

56134 |

| filter |

local |

1 |

7678 |

7678 |

7678 |

| filter |

s3 |

1 |

18805 |

18805 |

18805 |

| test_name |

query_type |

local_time |

s3_time |

s3_vs_local_ratio |

performance_verdict |

| Date Filter |

filter |

10304 |

16306 |

1.58 |

Local Faster |

| Daily Aggregation |

aggregation |

7192 |

16167 |

2.25 |

Local Much Faster |

| Fare Filter |

filter |

7276 |

17033 |

2.34 |

Local Much Faster |

| Hourly Analysis |

aggregation |

6914 |

19007 |

2.75 |

Local Much Faster |

| Complex Analysis |

complex |

6259 |

18754 |

3.00 |

Local Much Faster |

| Group By Vendor |

aggregation |

4544 |

17737 |

3.90 |

Local Much Faster |

| Complex Filter |

filter |

3166 |

18048 |

5.70 |

Local Much Faster |

| Select Limit 1000 |

select |

42 |

1008 |

24.00 |

Local Much Faster |

| Window Functions |

complex |

235 |

8461 |

36.00 |

Local Much Faster |

| Workload Test 1 |

workload |

3 |

8948 |

2982.67 |

Local Much Faster |

| Workload Test 5 |

workload |

0 |

10640 |

|

Local Much Faster |

| Cache Test – Warm 1 |

cache_test |

|

12611 |

|

S3 Only |

| Workload Test 2 |

workload |

0 |

8824 |

|

Local Much Faster |

| Simple Count |

aggregation |

0 |

34849 |

|

Local Much Faster |

| Cache Complex Query |

cache_test |

|

9818 |

|

S3 Only |

| Cache Test – Warm 2 |

cache_test |

|

8427 |

|

S3 Only |

| Cache Test – Cold |

cache_test |

|

56295 |

|

S3 Only |

| Simple AVG |

aggregation |

0 |

17452 |

|

Local Much Faster |

| Workload Test 4 |

workload |

0 |

8815 |

|

Local Much Faster |

| Workload Test 3 |

workload |

0 |

9099 |

|

Local Much Faster |

No surprise — accessing data from S3 is slower, sometimes significantly slower, compared to querying data stored directly in an Aurora table.

But what about the cost aspect?

Let’s compare storage costs for the second dataset:

Aurora table: 1.3TB (1,330 GB)

S3 CSV files: 800MB (0.8 GB)

Monthly Costs for these data volumes:

| Service |

Storage Cost |

I/O Cost |

Total Monthly Cost |

Annual Cost |

| S3 Standard |

$0.018 |

$0.009 |

$0.027 |

$0.32 |

| Aurora Standard |

$133.00 |

$0.20 |

$133.20 |

$1,598 |

| Aurora I/O-Optimized |

$299.25 |

$0.00 |

$299.25 |

$3,591 |

Annual Savings:

Aurora Standard → S3: Save $1,598/year

Aurora I/O-Optimized → S3: Save $3,591/year

If the table grows significantly (e.g., 10 TB of historical data), the savings become substantial — especially in cases with multiple customers or several databases sharing similar structures and data volumes.

In general, S3 storage is approximately 4.4× cheaper than Aurora when storing the same data volume.

However, as observed in this test, the actual data stored in S3 (CSV or Parquet) is often much smaller than the equivalent data stored in an Aurora table — making the real cost advantage even greater.

Conclusion:

S3 Offloading is Financially Attractive When

Dataset > 100GB

Data is accessed infrequently (archives, historical data)

Read-heavy analytical workloads

Cost optimization is a priority vs response time for the queries

Perfect Use Cases are

Daily/weekly reports (infrequent access)

Monthly analytics (batch processing)

ETL processes (load → transform → export)

Compliance reports (quarterly/annual)

Data validation (periodic checks)

Key Challenges & Considerations for Aurora + S3 Temporary Loading Approach

Data Management Challenges:

Export scheduling – Automate daily/weekly data moves to S3

Data consistency – Ensure Aurora and S3 data sync properly

Cleanup automation – Drop temp tables after processing

Storage lifecycle – Manage S3 storage classes (Standard → IA → Glacier)

Performance Considerations:

Import time – 30-60 minutes for large datasets (plan accordingly)

Aurora I/O spikes – Temporary high I/O during S3 imports

Workload access – Handle conflicts if multiple processes need data

Query optimization – Temp tables need proper indexing

Operational Overhead:

Monitoring – Track import success/failures, processing times

Error handling – Retry logic for failed S3 imports

Alerting – Notify when reports fail or take too long

Version control – Manage function updates and deployments

Security & Compliance:

IAM permissions – Aurora → S3 access rights

Data encryption – S3 encryption at rest and in transit

Audit trails – Log data access and movements

Retention policies – How long to keep S3 data

Cost Monitoring:

S3 request costs – PUT/GET operations add up

Aurora I/O costs – Temporary spikes during imports

Data transfer costs – Aurora → S3 bandwidth charges

Storage optimization – Compress data, use efficient formats

Architecture Decisions:

Hot vs Cold data – What stays in Aurora vs moves to S3

Partitioning strategy – How to organize S3 data (by date, etc.)

Backup strategy – S3 as backup or primary archive

Disaster recovery – How to restore from S3 if needed

Bottom line: Great cost savings, but requires solid DevOps practices and monitoring to manage the complexity.

Happy clouding!